The role of the software professional is changing. For a long time, building applications was the primary focus. In many businesses today, however, the data itself is the product. Having spent two decades watching the industry move from localized databases to massive, distributed cloud systems, I can tell you that the most important skill today isn’t just writing code. It is managing the flow of information.

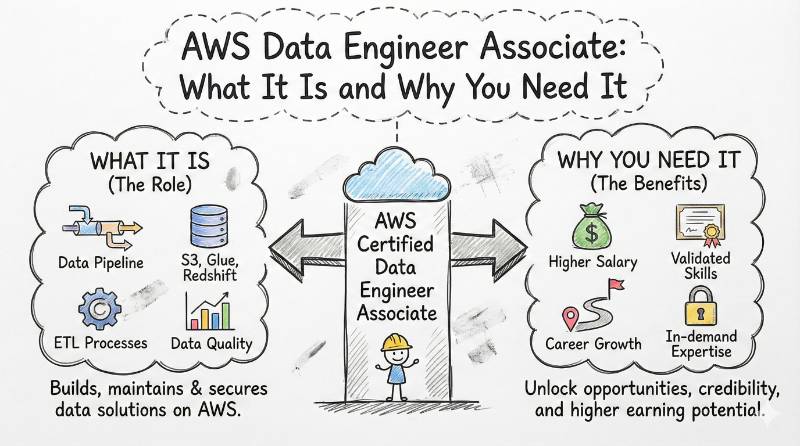

The AWS Certified Data Engineer – Associate is more than just a certificate; it is a professional roadmap. It signals that you understand how to build the “digital plumbing” that allows businesses to make decisions in real time. For engineers and managers in India and across the global market, this credential can help you move from a generalist role into a high-impact, specialized career. This guide walks through the details of the certification and shows you how to approach the AWS data ecosystem.

AWS Certified Data Engineer Associate: Comprehensive Overview

To understand where this certification fits in your career, look at the technical breakdown below. The table summarizes the requirements and the core skills the exam validates.

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| Data Operations | Associate | Software Eng, Data Eng, Managers | 1-2 years cloud data experience | Ingestion, ETL, Security, Data Lakes | After Solutions Architect Associate |

AWS Certified Data Engineer – Associate

What it is

The AWS Certified Data Engineer – Associate (DEA-C01) is a specialized certification designed to prove your ability to design, build, and maintain data systems on the Amazon Web Services platform. While other certifications focus on general cloud design, this one focuses strictly on the “Data Lifecycle.” It tests your knowledge of how to collect data from different sources, how to clean it using automated tools, and how to store it so it is easy to find but cheap to keep. It is a technical benchmark that proves you can handle both “batch” data (large volumes processed together on a schedule) and “streaming” data (records processed continuously as they arrive).
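To make the batch versus streaming distinction concrete, here is a minimal sketch in plain Python with no AWS services involved. The event list and function names are hypothetical and exist only for illustration. A batch job sees the whole dataset at once, while a streaming consumer updates its result one record at a time.

```python
from collections import Counter
from typing import Iterable, Iterator

# Hypothetical page-view events; real pipelines would read files or a stream.
EVENTS = ["home", "cart", "home", "checkout", "home"]


def batch_count(events: Iterable[str]) -> Counter:
    """Batch style: the full dataset is available, so count it in one pass."""
    return Counter(events)


def streaming_count(events: Iterable[str]) -> Iterator[Counter]:
    """Streaming style: keep a running total and emit a snapshot per record."""
    running: Counter = Counter()
    for event in events:
        running[event] += 1
        yield running.copy()


batch_result = batch_count(EVENTS)
snapshots = list(streaming_count(EVENTS))
```

Both approaches end with the same totals. The difference is that the streaming version has a usable, partial answer after every record, which is the property real-time dashboards rely on.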

Who should take it

This path is built for Software Engineers who want to pivot into data-heavy roles, where demand (and often pay) tends to be higher. It is also a good fit for ETL Developers who are tired of using old-school, on-premises tools and want to master the cloud. Finally, Engineering Managers should take this training to understand the technical hurdles their teams face. If you are responsible for the health and security of a company’s data, this is the training you need.

Skills you’ll gain

Preparing for this exam will change how you view software architecture. You will move away from thinking about single servers and start thinking about massive data ecosystems.

- High-Volume Ingestion: You will master how to pull data from thousands of sources at once using tools like Amazon Kinesis and AWS Glue. This ensures the data is ready for the business to use immediately.

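- Complex Data Transformation: You will learn how to clean and reorganize data with managed tools so that you spend little or no time administering servers. This includes mastering AWS Glue, which is serverless, and Amazon EMR, which runs managed clusters and also offers a serverless option.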

- Storage and Cost Efficiency: One of the most important skills is knowing where to put data. You will learn to use S3, Amazon Redshift, and DynamoDB in a way that provides top performance without wasting the company’s budget.

- Security and Governance: Data is a target for attacks. You will learn how to use AWS Lake Formation for fine-grained permissions and AWS KMS for encryption, so that only the right people have access to the right information at the right time.

- Pipeline Automation: You will gain the ability to use AWS Step Functions to connect different data tasks into one automated workflow that needs little manual intervention.
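As an illustration of the pipeline automation skill, the sketch below builds a small Step Functions definition in Amazon States Language as a Python dictionary. The job names and state names are hypothetical, and the retry settings are arbitrary example values; only the overall structure of the definition is the point.

```python
import json

# Hypothetical Glue job names; replace with jobs that exist in your account.
RAW_TO_CLEAN_JOB = "raw-to-clean"
CLEAN_TO_CURATED_JOB = "clean-to-curated"


def glue_task(job_name, next_state=None):
    """Build a Task state that starts a Glue job and waits for it to finish."""
    state = {
        "Type": "Task",
        # Service integration that runs a Glue job and waits for completion.
        "Resource": "arn:aws:states:::glue:startJobRun.sync",
        "Parameters": {"JobName": job_name},
        # Retry any error with exponential backoff before failing the run.
        "Retry": [
            {
                "ErrorEquals": ["States.ALL"],
                "IntervalSeconds": 30,
                "MaxAttempts": 2,
                "BackoffRate": 2.0,
            }
        ],
    }
    if next_state is None:
        state["End"] = True  # Last step of the workflow.
    else:
        state["Next"] = next_state
    return state


state_machine = {
    "Comment": "Two-step ETL pipeline (illustrative)",
    "StartAt": "CleanRawData",
    "States": {
        "CleanRawData": glue_task(RAW_TO_CLEAN_JOB, next_state="BuildCuratedTables"),
        "BuildCuratedTables": glue_task(CLEAN_TO_CURATED_JOB),
    },
}

definition_json = json.dumps(state_machine, indent=2)
```

The resulting `definition_json` string is what you would supply as the state machine definition. Keeping retries in the definition, rather than in each job script, is one way to reduce manual restarts.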

Real-world projects you should be able to do

After completing this training, you will have the practical skills to handle real engineering challenges that modern companies face.

- Real-Time Clickstream Analytics: Build a system that captures every click a user makes on a website, processes that data instantly using AWS Lambda, and shows the results on a business dashboard in seconds.

- Serverless Data Lake Construction: Design a storage system on S3 that automatically moves raw data into “Clean” and “Analysis-ready” folders using AWS Glue jobs, with Glue crawlers keeping the Data Catalog up to date.

- Multi-Account Security Hub: Set up a centralized governance layer that allows you to manage data permissions across different departments or even different countries from one single console.

- Enterprise-Scale Data Migration: Lead a project to move an old, slow on-premises SQL database into a modern, fast Amazon Redshift data warehouse with minimal downtime for the business.
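For the clickstream project, the processing step could look something like the following Lambda handler. This is a minimal sketch: it assumes each Kinesis record carries a JSON click event with a hypothetical `page` field, and it leaves out the storage and dashboard parts entirely. The `make_test_event` helper is also hypothetical and exists only so the handler can be tried locally.

```python
import base64
import json
from collections import Counter


def lambda_handler(event, context):
    """Count clicks per page from one batch of Kinesis records."""
    clicks_per_page = Counter()
    for record in event["Records"]:
        # Kinesis delivers each record payload base64-encoded.
        payload = base64.b64decode(record["kinesis"]["data"])
        click = json.loads(payload)
        # "page" is an assumed field name in our example click events.
        clicks_per_page[click["page"]] += 1
    return dict(clicks_per_page)


def make_test_event(clicks):
    """Build a minimal fake Kinesis event for local testing."""
    return {
        "Records": [
            {"kinesis": {"data": base64.b64encode(json.dumps(c).encode()).decode()}}
            for c in clicks
        ]
    }


sample_event = make_test_event(
    [{"page": "/home"}, {"page": "/cart"}, {"page": "/home"}]
)
result = lambda_handler(sample_event, None)
```

Being able to read a handler like this, and to spot the base64 decoding step, is the level of code literacy the exam scenarios tend to assume.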

Preparation Plan

Success in this exam depends on your study timeline. Here are three ways to approach it:

| Timeline | Action Strategy |
| --- | --- |
| 7–14 Days (The Sprint) | This is for those already working in AWS. Focus purely on your weak spots. Spend 80% of your time on AWS Glue and Redshift optimization. Take 5 mock exams to get used to the fast pace of the questions. |
| 30 Days (The Standard) | Weeks 1-2: Master data movement and storage (S3, Kinesis, Redshift). Week 3: Focus on processing and automation (Glue, Step Functions). Week 4: Deep dive into security and take 3-4 full practice tests. |
| 60 Days (The Deep Dive) | Recommended for software engineers new to the data world. Spend the first 30 days doing hands-on labs every day. Spend the second month mastering the theoretical concepts and tricky exam scenarios. |

Common Mistakes

Many talented engineers fail this exam because they miss the “hidden” requirements of the cloud.

- Focusing Only on Movement: It is a mistake to only worry about moving data. A large part of the exam is about security. If you don’t understand how IAM roles and bucket policies work together, you will likely lose many of the points available on security questions.

- Ignoring the Cloud Bill: AWS wants you to build systems that save money. On the exam, if two options both meet the stated requirements, the more cost-effective one is usually the correct answer.

- Not Learning the Code: While you don’t need to be a Python expert, you must be able to read and understand the basic Spark or Python scripts used in AWS Glue.

- Poor Data Organization: Setting up a “Data Lake” on S3 without clear folders (partitioning) makes it slow and expensive to search. You must learn the logical rules for organizing cloud data.
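The partitioning point is easier to see with an example. The sketch below builds S3 object keys using the common Hive-style `key=value` layout. The dataset name, file name, and the choice of year/month/day as partition columns are assumptions for illustration; the right partition columns depend on how your data is actually queried.

```python
from datetime import datetime, timezone


def partitioned_key(dataset, event_time, filename):
    """Return an S3 object key with Hive-style year/month/day partitions."""
    return (
        f"{dataset}/"
        f"year={event_time:%Y}/month={event_time:%m}/day={event_time:%d}/"
        f"{filename}"
    )


key = partitioned_key(
    "clickstream",  # Hypothetical dataset prefix.
    datetime(2024, 5, 17, 9, 30, tzinfo=timezone.utc),
    "part-0000.parquet",
)
print(key)
```

With a layout like this, and the partitions registered in the Data Catalog, a query that filters on `year`, `month`, and `day` can skip every other prefix instead of scanning the whole bucket.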

Choose Your Path: 6 Specialized Learning Tracks

This certification is a versatile building block. Depending on your career goals, you can branch out into these six areas:

- DevOps: Use your data knowledge to automate the infrastructure that supports massive applications. You will ensure that the “pipes” are always working and that deployments are fast.

- DevSecOps: Make data protection your specialty. Since data is a primary target for hackers, you will learn to build security and encryption directly into every automated pipeline.

- SRE (Site Reliability Engineering): Focus on the uptime of the data system. You will build self-healing pipelines that can automatically fix themselves if they stop working.

- AIOps/MLOps: Become the expert who prepares the data for AI models. Without high-quality data engineering, modern artificial intelligence projects cannot succeed.

- DataOps: This is the core domain for this certification. You will focus on the speed and quality of data delivery across the whole company, ensuring that data is always fresh and accurate.

- FinOps: Focus on the financial health of the cloud. You will use your understanding of storage and compute to keep the cloud bill as low as possible while maintaining high performance.

Role → Recommended Certifications Mapping

To help you plan your next steps, here is how different roles should stack their certifications.

| Your Current Role | Primary Certification | Secondary/Support Certs |
| --- | --- | --- |
| Data Engineer | AWS Data Engineer Assoc. | AWS Solutions Architect Assoc. |
| DevOps Engineer | AWS DevOps Engineer Prof. | AWS Developer Assoc. |
| SRE | AWS SysOps Admin Assoc. | AWS DevOps Engineer Prof. |
| Platform Engineer | AWS Solutions Architect Prof. | CKA (Certified Kubernetes Admin) |
| Security Engineer | AWS Security Specialty | AWS Solutions Architect Assoc. |
| Cloud Engineer | AWS Solutions Architect Assoc. | AWS SysOps Admin Assoc. |
| FinOps Practitioner | AWS Cloud Practitioner | FinOps Certified Practitioner |
| Engineering Manager | AWS Cloud Practitioner | AWS Solutions Architect Assoc. |

Next Certifications to Take (Top 3 Options)

Once you have mastered the Data Engineer Associate, these are the best options for your next career move:

- Option 1 (Same Track): AWS Certified Machine Learning Engineer – Associate. This allows you to not just move the data, but build the AI models that use it. It is a natural evolution for a data engineer.

- Option 2 (Cross-Track): AWS Certified Solutions Architect – Associate. This provides a broader view of how data services interact with networking and general cloud design.

- Option 3 (Leadership): PMP (Project Management Professional). For those looking to move into management, this bridges the gap between technical work and business strategy.

Top Institutions for AWS Data Engineer Training

If you are looking for professional help to pass your certification, these institutions are highly recommended for their specialized approach:

- DevOpsSchool: This is a premier institution for those who want instructor-led training. They focus on real-world projects and provide a very hands-on experience that helps you understand the “why” behind every service.

- Cotocus: They specialize in technical training for corporate teams, helping individuals bridge the gap between classroom theory and actual industry work in the cloud data space.

- Scmgalaxy: This institution offers training that covers the entire software life cycle, helping data engineers understand how their work fits into the bigger picture of DevOps.

- BestDevOps: Focuses on quick upskilling, helping you learn the most important AWS data tools through structured and easy-to-follow modules.

- devsecopsschool: If you want to specialize in protecting data, this is the place. Their courses emphasize security, encryption, and compliance within the AWS cloud.

- sreschool: Their curriculum is built around reliability and scalability, teaching you how to build data systems that can handle massive amounts of traffic without failing.

- aiopsschool: This school focuses on the future of operations, teaching you how data pipelines are essential for modern AI and machine learning workflows.

- dataopsschool: A specialized institution dedicated to the DataOps domain, providing focused training on the entire journey of data from collection to delivery.

- finopsschool: This school teaches the vital skill of cloud financial management, ensuring you can build powerful data systems that stay within the company’s budget.

FAQs: Career, Value, and Strategy

1. How difficult is the AWS Data Engineer Associate exam compared to others? It is more technically narrow but much deeper than the Solutions Architect exam. You need to understand exactly how services like Glue and Redshift work, rather than just knowing what they are.

2. How much time do I need to commit to passing this? If you already work in the cloud, 40-60 hours of focused study is usually enough. For those new to data engineering, plan for 100+ hours to include enough hands-on lab time.

3. Are there any mandatory prerequisites for the exam? No. You can take this exam without having any other certifications. However, understanding the basics of the cloud (Cloud Practitioner level) is very helpful.

4. What is the best sequence for taking AWS certifications? The ideal path is: Cloud Practitioner -> Solutions Architect Associate -> Data Engineer Associate. This builds your knowledge step-by-step so you don’t feel overwhelmed.

5. Does this certification hold value for managers? Yes. It gives managers the technical vocabulary they need to lead teams effectively, plan project timelines accurately, and make better budget choices.

6. What are the career outcomes after getting certified? Professionals often see a shift toward higher-paying roles like Senior Data Engineer or Analytics Lead. It is a major signal to recruiters that you have specialized, high-demand skills.

7. How long is the certification valid? It lasts for three years. To keep it active, you recertify before it expires, typically by passing the latest version of the exam. Check the AWS recertification policy for any other options that apply.

8. Is this better than the old AWS Certified Data Analytics – Specialty? Yes, because it focuses on the engineering (the actual building of the systems), which is what the industry needs most right now. With the Specialty exam now retired, it is the current standard.

9. Can a regular Software Developer switch to Data Engineering using this? Absolutely. This certification is designed to teach developers how to apply their coding skills to manage large amounts of data in the cloud.

10. How does this help with global job opportunities? AWS certifications are recognized all over the world. Having this credential makes it much easier to pass technical screenings for roles in the US, Europe, or the Middle East.

11. What is the passing score for the exam? The exam is scored from 100 to 1,000. You need a minimum score of 720 to pass.

12. Is there a lab portion in the actual exam? Currently, no. The exam consists of multiple-choice and multiple-response questions. However, the questions are based on real-world scenarios, so passing without hands-on experience is very difficult.

FAQs: Technical Training & Exam Content

1. Which AWS service is the most important to learn for this exam? AWS Glue is the star of the exam. You must understand the Data Catalog, Crawlers, and how to use Glue for cleaning and moving data.

2. Do I need to be an expert in Python to pass? No, but you should be able to read and understand basic Python or Spark code, as you will see these in questions about Glue and Lambda.

3. How much focus is there on “Streaming” data? Quite a lot. You will need to know when to use Kinesis Data Streams for low-latency processing and when to use Amazon Data Firehose (formerly Kinesis Data Firehose) for delivering data to storage.

4. Does the training cover SQL? Yes. You should be comfortable using SQL to query data in Amazon Athena and to perform basic tasks in Amazon Redshift.

5. What is the role of “Data Lakes” in this certification? Data Lakes (using S3 and Lake Formation) are a central part of the exam. You will be tested on how to store data securely and how to manage access.

6. Is cost management a major part of the training? Yes. You will learn how to choose the right storage tiers (like S3 Intelligent-Tiering) and how to optimize your queries so they don’t cost too much.

7. How are security and compliance handled in the training? The exam covers “Security by Design.” This includes using KMS for encryption and setting up IAM roles so different services can talk to each other safely.

8. What kind of automation tools are covered? The focus is on AWS Step Functions for serverless automation and Amazon Managed Workflows for Apache Airflow (MWAA) for more complex, code-based data workflows.
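To ground the cost-management answer above, here is a sketch of an S3 lifecycle configuration written as a Python dictionary, in the shape that boto3's `put_bucket_lifecycle_configuration` accepts. The `raw/` prefix, the rule ID, and the day counts are hypothetical values chosen for illustration, and `validate_rules` is a made-up helper, not part of any AWS library.

```python
import json

# Hypothetical prefix and day counts; tune them to your own access patterns.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "tier-and-expire-raw-data",
            "Status": "Enabled",
            "Filter": {"Prefix": "raw/"},
            # Move objects to Intelligent-Tiering 30 days after creation.
            "Transitions": [{"Days": 30, "StorageClass": "INTELLIGENT_TIERING"}],
            # Delete raw objects one year after creation.
            "Expiration": {"Days": 365},
        }
    ]
}


def validate_rules(config):
    """Run basic sanity checks before sending the configuration to S3."""
    for rule in config["Rules"]:
        if rule["Status"] not in ("Enabled", "Disabled"):
            return False
        expire_days = rule.get("Expiration", {}).get("Days")
        for transition in rule.get("Transitions", []):
            # A transition only makes sense if it happens before expiration.
            if expire_days is not None and transition["Days"] >= expire_days:
                return False
    return True


is_valid = validate_rules(lifecycle_configuration)
print(json.dumps(lifecycle_configuration, indent=2))
```

Expressing storage rules as data like this makes cost decisions reviewable in the same way as code, which is the habit the exam's cost-optimization questions reward.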

Conclusion

The evolution toward data-centric business is not just a trend; it is the new standard for the global economy. By earning the AWS Certified Data Engineer – Associate certification, you are doing more than just adding a logo to your resume. You are proving that you can architect and manage the systems that modern decision-making depends on. Whether you are an engineer looking to specialize or a manager trying to better understand your team’s technical work, this training provides the depth needed to build secure, scalable, and efficient data platforms. The cloud is built on data, and now is the time to ensure you have the skills to lead the way in this field. Investing in your education today is the surest path to staying relevant in an ever-changing industry.